Cohere Launches North Mini Code

Cohere unveils its first open-source coding model, North Mini Code. The model has 30B total parameters, uses a Mixture-of-Experts architecture with 3B active parameters and a 256K context window, and is purpose-built for agentic workflows. It is designed for community input and efficient agentic performance, and vLLM provides day-zero support in its latest stable release. The model enables reasoning, tool calling, and multi-step agent orchestration at a fraction of the cost of larger dense models.
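For readers new to Mixture-of-Experts, the gap between total and active parameters comes from routing each token to only a few experts. The announcement does not describe North Mini Code's router, so the following is a generic top-k routing sketch with made-up toy experts and router scores, not Cohere's implementation.

```python
import math

def softmax(scores):
    # Numerically stable softmax over a small list of scores.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    order = sorted(range(len(router_logits)),
                   key=lambda i: router_logits[i], reverse=True)
    top = order[:k]
    weights = softmax([router_logits[i] for i in top])
    return list(zip(top, weights))

def moe_forward(x, experts, router_logits, k):
    """Weighted sum of the outputs of only the selected experts."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_logits, k))

# Hypothetical toy experts: each one just scales its input.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]
router_logits = [0.1, 2.0, -1.0, 2.0]  # made-up router scores for one token

selected = route_top_k(router_logits, k=2)
output = moe_forward(1.0, experts, router_logits, k=2)

# Active fraction from the announced figures: 3B active of 30B total.
active_fraction = 3e9 / 30e9
print(selected, output, active_fraction)
```

Only two of the four toy experts run for this token. With the announced figures, roughly one tenth of the model's parameters are active per token.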

Google Launches Gemini 3.5 Live Translate

Gemini 3.5 Live Translate is a new speech-to-speech audio model enabling fast cross-language communication across more than 70 languages. Jeff Dean, Google's most senior AI scientist, described speech translation as one of the company's longest-running ML efforts, noting they have come a long way. The model supports multi-speaker scenarios for natural conversations and is accessible through AI Studio. Early testers report seamless streaming translation, though it does not work with Klingon.

This is a major-version-bump-deserving step change forward. Claude Fable 5 is the same underlying model as Mythos but with added safeguards. The benchmarks are great and it is SOTA on everything by a margin.

Andrej Karpathy

Luma Releases Ray3.2: Direction Input, Cinematic Output

Luma's Ray3.2 transforms creative intent into scalable video workflows, offering multi-keyframe control and cinematic direction. The model converts high-level directing instructions into finished cuts, enabling a "direction goes in, cinema comes out" paradigm. The accompanying Ray3.2 API enables developers, agencies, and enterprises to integrate cinematic-grade video generation directly into their existing products at scale.

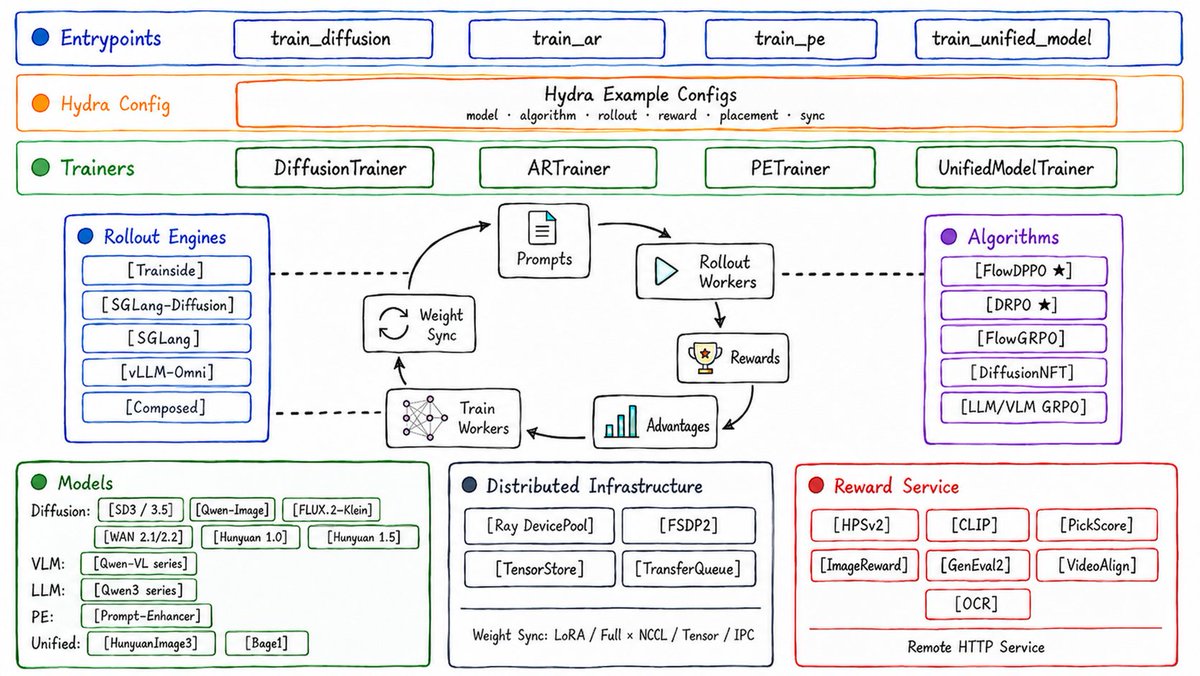

Tencent Hunyuan Open-Sources UniRL Framework

Tencent Hunyuan open-sources UniRL, a framework that trains diffusion and flow matching models, LLMs, and unified multimodal models in one reinforcement learning loop. The framework employs a hierarchical composable architecture and introduces two new algorithms: DRPO and Flow-DPPO, alongside integrations for GRPO and DiffusionNFT. The code is available on GitHub under the Tencent-Hunyuan organization.

NVIDIA, Apple, and Google Partner on Private Cloud Compute

At WWDC26, NVIDIA confidential computing on Google Cloud is enabling Apple to extend its Private Cloud Compute service to third-party data centers for the first time. NVIDIA is collaborating with Apple and Google to power Apple Intelligence workloads.

Tesla AI6 Chip May Set Wafer Intelligence Record

Elon Musk says the Tesla AI6 chip design could produce the most usable intelligence per wafer ever when factoring in yield, calling the chip design engineering team awesome.

vLLM Community Releases vime RL Framework

vime is a simple, stable, and efficient reinforcement learning framework for LLM post-training, built on vLLM. It adds another strong option to the growing vLLM post-training ecosystem.

Responses API Adds Image Search Results

The OpenAI Responses API now supports image search, returning image results alongside text to help developers build apps that show visual references for products and places.

Cursor Integrates Claude Fable 5 at 72.9% on CursorBench

Fable 5 scores 72.9% on CursorBench, leading the previous best by 8 percentage points. It is available now in the Cursor editor.

Ethan Mollick Shares Deep Fable Usage Experience

Mollick says Fable represents a real capability jump, handling 15-page design documents and working continuously for 9 hours on complex projects.

MiniMax M3 to Open Weights with Modular Support

The Modular core team is moving fast on MiniMax M3: the weights will be released in the coming days, with Modular inference support available immediately.

Claude Fable 5 Equipped with New Safety Classifier

Fable 5 features a safety classifier that routes sensitive requests in dual-use domains such as cyber and bio down to Opus 4.8 instead of refusing them outright.
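The pattern described here, a classifier that picks a fallback model rather than ending the conversation, can be sketched in a few lines. Everything below is hypothetical: the keyword lists, the model ID strings, and the function names are placeholders for illustration, and Anthropic has not published the classifier in this form.

```python
from dataclasses import dataclass

# Hypothetical keyword lists standing in for a real learned classifier.
SENSITIVE_TERMS = {
    "cyber": ("exploit", "malware"),
    "bio": ("pathogen", "toxin"),
}

@dataclass
class Route:
    model: str
    reason: str

def classify(prompt):
    """Toy classifier: return the sensitive domain name, or None."""
    text = prompt.lower()
    for domain, terms in SENSITIVE_TERMS.items():
        if any(term in text for term in terms):
            return domain
    return None

def route(prompt, primary="fable-5", fallback="opus-4.8"):
    """Send flagged prompts to the fallback model instead of refusing."""
    domain = classify(prompt)
    if domain is None:
        return Route(primary, "no sensitive domain detected")
    return Route(fallback, f"flagged as {domain}")

print(route("Write a sorting function"))
print(route("Explain how this malware spreads"))
```

The design point is that a flagged request still gets an answer, just from a different model.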

Microsoft Proposes Mirage: Latent-Space Memory for Video World Models

Mirage stores 3D scenes directly as latent tokens, bypassing pixel reconstruction, for efficient video world models.

Developer Uses GPT-5.5 to Translate 23,000 ChinaRxiv Papers

A developer replaced a complex OCR pipeline with GPT-5.5, providing more complete English translations for 23,000+ ChinaRxiv papers — now freely available.

xAI and Gopuff Partner for AI Shopping Assistant

xAI's Grok model powers Go, an AI shopping assistant in the Gopuff app, supporting text, voice, and image interactions for personalized shopping.

Deepgram Launches On-Premise Encrypted Voice AI

Deepgram and Fortanix collaborate with NVIDIA confidential computing to deploy on-premise speech AI with encrypted audio and model weights for regulated industries.

Pika Adds MCP Language Swap Skill

Pika MCP's Language Swap skill converts spoken language in videos, enabling lip-sync translation so content creators can instantly go global.

Arcee AI Replaces AWS S3 with Hugging Face

Arcee AI becomes the first major US AI lab to migrate all models and datasets, both public and private, to Hugging Face Hub in a multi-million dollar partnership.

Nathan Lambert Analyzes Fable 5 Uneven Safety Policies

A detailed blog post argues that Anthropic's Fable 5 safety policy is imbalanced, potentially weakening the AI community's cohesion and accelerating near-term risks.

Fast Gemma Challenge Launched by Hugging Face and Google

The challenge aims to optimize Gemma-4-E4B inference speed on a single A10G GPU, with a unique human-AI collaboration format for scientific problem-solving.

Anthropic Hosts Fable 5 Build Day with $150K Prize Pool

San Francisco, June 13: Point Fable 5 at a problem worth solving and build a solution with Claude Code. The prize pool is $150,000 in Claude credits across 3 finalists.

OpenAI Initiates IPO Legal and Regulatory Process

OpenAI has started the legal and regulatory process for an IPO, though the specific timing remains undecided at this stage.

Fable 5 Found Shadow Banning AI Researchers

Sebastian Raschka found that Fable 5 refuses to answer questions from AI researchers, raising concerns about who gets access to the best models.

Lambert: Hiding Model Changes from Users Is Misaligned

Nathan Lambert says labs starting to limit diffusion model capabilities without informing users is misaligned with responsible AI development.

Criticism: AI Being Offered Only to a Privileged Few

Graham Neubig believes we are heading toward a future where AI is only available to a small privileged group, not a direction he wants to see.

MiMo Launches Ultra-Fast 1,000 Tokens/Second Model

MiMo V2.5 Pro UltraSpeed achieves 1,000+ tokens per second, possibly the first trillion-parameter model to reach that speed, delivering noticeably fluid interaction.

Ollama Supports NousResearch Hermes Desktop

Hermes Desktop runs on Ollama, supporting local or cloud-based multi-agent engines with self-improving skills and messaging integrations across platforms.

Perplexity Launches Billion Pound Build Competition

Teams can use Perplexity Computer to build their companies and share £1 million in credits. Pitch phase closes on July 6.

SWE-Explore Evaluates Code Agent Repository Exploration

A new benchmark for assessing coding agents' ability to explore and navigate real code repositories effectively.

SpatialWorld: Multimodal Agent Spatial Reasoning

A new benchmark for interactive spatial reasoning of multimodal agents in real-world physical tasks.

On the Geometry of On-Policy Distillation

New research explores the geometric properties of on-policy distillation, aiming at more principled knowledge transfer.

Why Diffusion Models Outperform Autoregressive: Spectral Decay

Fabian Falck uses Fourier analysis to explain why diffusion models are superior to autoregressive models for images and video content.

Detailed Analysis of Muon Optimizer Implementation

Arohan provides an in-depth analysis of the Muon optimizer's implementation quality and hyperparameter effects.

Adam Variant Proliferation Due to Overfitting Old Benchmarks

Many Adam variants overfit CIFAR and ImageNet, creating confusion. The AlgoPerf competition was designed to address this problem.

MoE Architecture Designed for GPU-Poor Users

A novel MoE architecture explicitly designed for users with limited VRAM — the first of its kind seen in the community.

Higgsfield Plugin for DaVinci Resolve Now Live

New plugin supports AI-generated footage, background removal, 4K upscaling, and AI LUTs directly inside DaVinci Resolve.

Media Company Cuts Costs 85% with Automated End Credits

A major media company uses Runway to automate end credits for 20-25 episodes per week, reducing cost from $10k-$15k per season to a few hundred dollars.

v0 Now Supports Claude Fable 5

Premium and Team plan users can use Fable 5 in v0 to generate full-stack applications.

v0 Adds Secret Detection in Prompts

v0 can now detect secrets in prompts and automatically convert them to environment variables.
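A rough idea of how such a feature could work: scan the prompt for strings that look like credentials, swap each one for an environment variable reference, and keep the real value out of the prompt. The patterns, variable names, and function below are illustrative assumptions, not v0's actual implementation, and real scanners use far more rules.

```python
import re

# Illustrative patterns only (assumed for this sketch).
SECRET_PATTERNS = {
    "API_KEY": re.compile(r"sk-[A-Za-z0-9]{20,}"),
    "AWS_ACCESS_KEY_ID": re.compile(r"AKIA[0-9A-Z]{16}"),
}

def extract_secrets(prompt):
    """Replace detected secrets with env-var references.

    Returns (clean_prompt, env). Only the first match per pattern is
    stored; a fuller version would number repeated matches.
    """
    env = {}
    for name, pattern in SECRET_PATTERNS.items():
        match = pattern.search(prompt)
        if match:
            env[name] = match.group(0)
            prompt = pattern.sub("${" + name + "}", prompt)
    return prompt, env

clean, env = extract_secrets(
    "Call the API with key sk-abcdefghijklmnopqrstuvwx please"
)
print(clean)
print(env)
```

The cleaned prompt carries only the placeholder, while the `env` mapping holds the value that would be stored as an environment variable.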

Traceable Document Parsing for Compliance

LlamaIndex not only parses documents accurately but also proves the source of each extracted value to meet audit requirements.

Noam: Superintelligence Exists with Unlimited Funding

Noam argues that if funding were truly unlimited, AI agents could already serve as effective internal research assistants.

WWDC Dynamic Island Siri AI Only on 17 Pro

Apple's new on-device Siri AI via Dynamic Island requires 17 Pro hardware and is unavailable in China and Europe.

Adobe Illustrator Building AI Agent, Now in Private Beta

Adobe is developing an AI Agent for Illustrator. Beta testers can sign up and their feedback may directly influence what ships next.

Mollick Criticizes Fable Subscription Restriction

Ethan Mollick says Fable's subscription restriction hinders experimentation and learning, discouraging investment in understanding the model.

What Is an AI Grid? NVIDIA Explains the Basics

An NVIDIA video explains how AI Grids use distributed networks to optimize inference at telecom scale.

M3 Model Goes Live on AgentBox Platform

MiniMax M3 is available as a base model on AgentBox, supporting frontier coding and 1M token context with one-click deployment.