xAI Opens Colossus Supercomputer to Anthropic for Claude

In an extraordinary partnership between frontier labs, xAI grants Anthropic access to one of the world's largest AI supercomputers, with 220,000+ Nvidia GPUs now powering Claude.

xAI announced a landmark compute partnership on Tuesday, granting Anthropic access to Colossus 1, one of the world's largest and fastest-deployed AI supercomputers, to provide additional training and inference capacity for the Claude family of models. The system is powered by more than 220,000 Nvidia GPUs, and the deal marks an unusual collaboration between two frontier AI labs that are otherwise competitors. xAI itself develops Grok, a multimodal chatbot with voice, image generation, and real-time search capabilities. The partnership significantly expands Claude's available compute at a moment when Anthropic is rapidly scaling both its API traffic and consumer products.

OpenAI, AMD, Nvidia Launch Multipath Reliable Connection Protocol

Six industry giants release MRC, an open-source networking protocol designed to reduce GPU idle time in massive AI training clusters.

OpenAI, alongside AMD, Broadcom, Intel, Microsoft, and Nvidia, has released Multipath Reliable Connection (MRC), a new open networking protocol purpose-built for AI supercomputers. The protocol helps large training clusters run faster and more reliably by keeping GPUs synchronized across record numbers of chips, reducing wasted GPU time that can cost millions at scale. The decision to open-source the protocol mirrors a broader industry trend of collaborative infrastructure development, where even competitors share the foundational layers that make frontier AI possible. MRC addresses a growing bottleneck: as clusters scale to hundreds of thousands of accelerators, traditional network protocols struggle to maintain synchronization without significant overhead.

"I usually avoid commenting too much on industry deals, but this one is fascinating. It certainly seems like a blow to the idea that Grok will remain a frontier model."

— Ethan Mollick, on the xAI–Anthropic partnership

Claude Managed Agents Adds Multi-Agent Orchestration and Self-Learning

Anthropic launched major new capabilities in Claude Managed Agents, including multi-agent orchestration that coordinates multiple Claude instances to solve complex tasks, an outcomes loop for rubric-driven self-improvement, and a feature called Dreaming that enables self-learning through autonomous exploration. The update also includes webhook support, allowing agents to integrate directly with external services. The release positions Claude as a platform for building autonomous agentic workflows that can iterate, learn, and collaborate without constant human supervision.

Anthropic Doubles Claude Code Limits, Removes Peak-Hour Throttling

Effective immediately, Anthropic doubled the 5-hour rolling limit for Claude Code across Pro, Max, Team, and seat-based Enterprise plans. The company also removed the peak-hour limit reductions that previously throttled Pro and Max users during high-traffic periods, and substantially raised API rate limits for Opus models. The changes coincide with the announcement of new compute capacity through the xAI partnership, signaling that Anthropic is rapidly expanding infrastructure to meet surging developer demand for coding agents and autonomous tool use. API traffic has grown 17x year-over-year, according to data shared at the Code w/ Claude event.

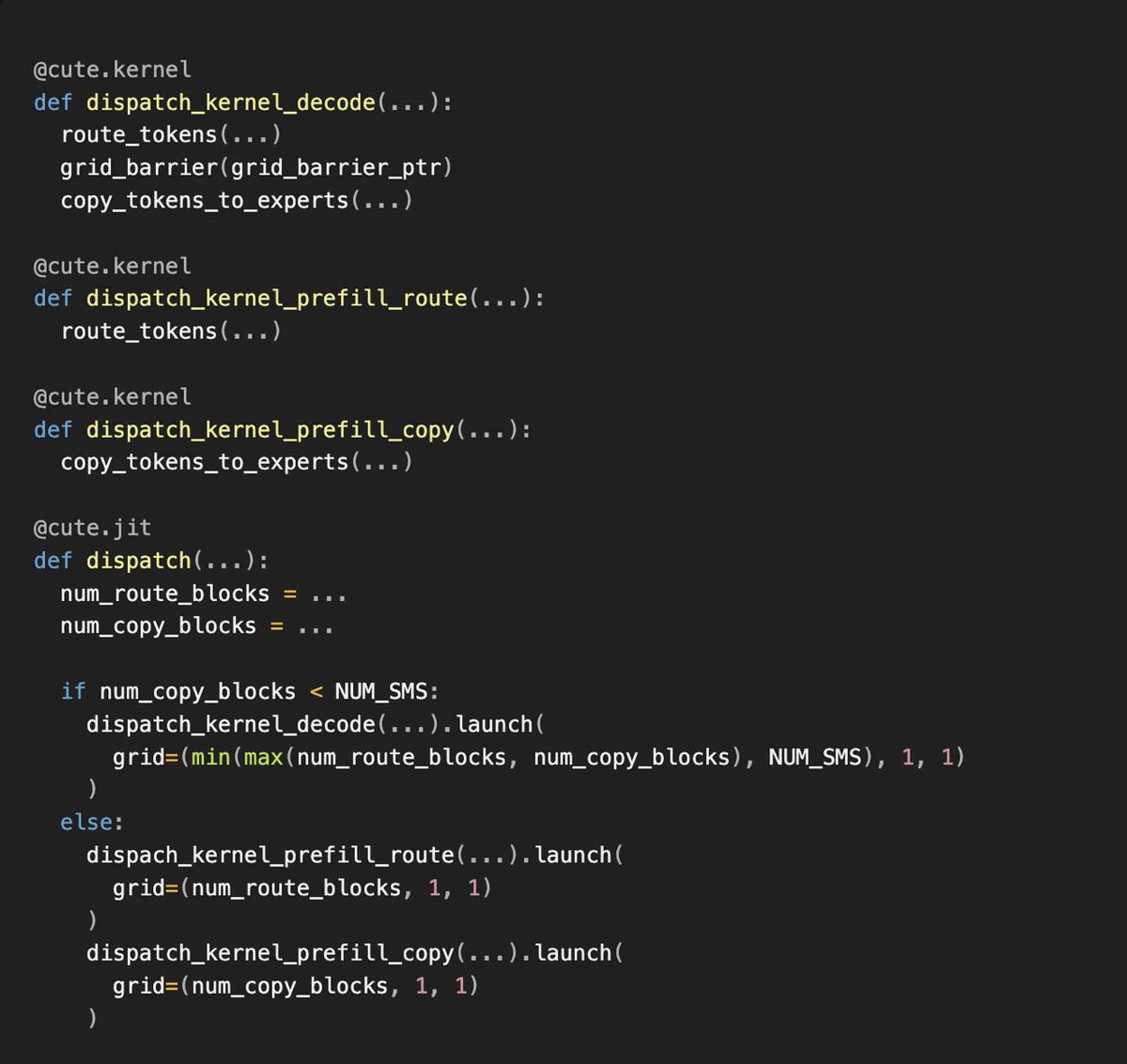

Perplexity Builds Proprietary ROSE Inference Engine

Perplexity has developed its own inference engine called ROSE (Runtime-Optimized Serving Engine), capable of serving models from embeddings to trillion-parameter LLMs. The engine integrates CuTeDSL to accelerate specialized GPU kernel building, giving Perplexity fine-grained control over inference performance. This vertical integration move brings Perplexity closer to frontier labs that control the full stack.

Google DeepMind Partners with EVE Online for Agent Research

Google DeepMind is partnering with the developers of EVE Online to use the game's complex, player-driven universe as a safe sandbox for testing AI agents on memory, continual learning, and long-term planning. The massively multiplayer game provides a rich environment where agents must navigate dynamic economies, social dynamics, and evolving constraints.

Tencent Hunyuan Hy3 Tops OpenRouter Weekly Chart

3.66 trillion tokens processed with 298% weekly growth.

Two weeks after release, Tencent's Hunyuan Hy3 Preview has claimed the top spot on OpenRouter's weekly leaderboard, processing 3.66 trillion tokens — a 298% increase week-over-week. The model ranked first in overall usage, tool calls, and coding, capturing a 15.4% market share across all providers. Top apps running Hy3 include Hermes Agent and Claude Code, underscoring strong demand for agentic workloads.
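As a quick sanity check on the growth figure, the prior week's volume can be backed out from the numbers above. This is a minimal sketch that assumes "298% increase" means the new volume equals the old volume plus 298% of it; the function name is illustrative, not something from OpenRouter.

```python
def previous_volume(current: float, pct_increase: float) -> float:
    """Back out the prior-period value from a percentage increase.

    Assumes current = previous * (1 + pct_increase / 100).
    """
    return current / (1 + pct_increase / 100)


# 3.66 trillion tokens this week after a 298% week-over-week increase.
prior = previous_volume(3.66, 298)
print(f"Implied prior week: {prior:.2f} trillion tokens")
```

Under that reading, the model processed a little under one trillion tokens the week before, so weekly volume roughly quadrupled.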

Luma Launches Uni-1.1 API, Reasons via Briefs Not Tokens

Luma released the Uni-1.1 API, which reasons by understanding creative briefs rather than merely processing tokens, generating cinema-quality results across fashion, architecture, and comics — without requiring middleware or prompt engineering. The API targets production pipelines where first-pass quality must be deliverable immediately.

Cursor Bootstraps Composer with Auto-Install for RL Training

Cursor uses earlier generations of its Composer model to automatically set up dev environments for reinforcement learning, allowing each new generation to focus on solving harder problems rather than infrastructure.

GPT-5.5 Instant Becomes ChatGPT Default Model

OpenAI updated GPT-5.5 Instant, making it the ChatGPT default. The model significantly reduces hallucination rates in law, finance, and medicine, while improving image understanding and document parsing.

Nvidia and ServiceNow Deliver Enterprise Autonomous AI Agents

Nvidia partnered with ServiceNow to deploy AI agents that act autonomously within enterprise workflows, with built-in governance, auditability, and secure execution. ServiceNow introduced Project Arc, a long-running desktop agent, at its Knowledge 2026 conference.

vLLM Integrates Mooncake for Agentic Workload Serving

vLLM partnered with Mooncake to serve agentic workloads at scale. Agentic traces grow to 80K+ tokens with 94%+ reusable prefixes. By integrating Mooncake Store as a distributed KV cache, vLLM solves cross-instance routing misses that previously evicted reusable context.
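The effect of a shared cache on cross-instance misses can be illustrated with a toy model. The sketch below is not the vLLM or Mooncake API: the `Instance` class, the `BLOCK` size, and the plain dictionary standing in for a distributed KV store are all assumptions made for illustration. It only shows why a follow-up turn that lands on a different instance reuses nothing with private caches and reuses the whole prefix with a shared one.

```python
from typing import Dict, List, Tuple

BLOCK = 4  # tokens per cache block (toy value, chosen for illustration)

Key = Tuple[int, ...]


def block_keys(tokens: List[int]) -> List[Key]:
    """Key each full block by the entire prefix up to and including it.

    Real systems hash the prefix; a tuple keeps the toy readable.
    """
    return [tuple(tokens[:end]) for end in range(BLOCK, len(tokens) + 1, BLOCK)]


class Instance:
    """Hypothetical serving instance backed by a private or shared store."""

    def __init__(self, store: Dict[Key, str]):
        self.store = store

    def serve(self, tokens: List[int]) -> int:
        """Return how many leading prompt tokens were reused from the cache."""
        reused = 0
        hit_run = True
        for key in block_keys(tokens):
            if hit_run and key in self.store:
                reused = len(key)
            else:
                hit_run = False
                self.store[key] = "kv-block"  # placeholder for real KV tensors
        return reused


turn1 = list(range(16))
turn2 = turn1 + [100, 101, 102, 103]  # same prefix plus one new block

# Private caches: the second turn is routed to a different instance.
a, b = Instance({}), Instance({})
a.serve(turn1)
private_reuse = b.serve(turn2)

# Shared store: either instance can find the prefix.
shared: Dict[Key, str] = {}
c, d = Instance(shared), Instance(shared)
c.serve(turn1)
shared_reuse = d.serve(turn2)

print(private_reuse, shared_reuse)
```

With private caches the second instance recomputes all 20 tokens; with the shared store it reuses the 16-token prefix and computes only the new block. With long traces and highly reusable prefixes like those described above, that difference accounts for most of the prefill work.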

OBLIQ-Bench: The Most Ambitious IR Benchmark Yet

Diane released OBLIQ-Bench, described as the most ambitious information retrieval benchmark to date. Early results show no long-context LLM works past 200K tokens on its natural retrieval tasks, exposing a critical ceiling. LightOn's 0.1B parameter late-interaction model beats far larger dense models, though scores remain at 8% nDCG@10, leaving room to climb toward 91%.
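For readers unfamiliar with the metric, nDCG@10 rewards placing relevant documents near the top of the first ten results and normalizes by the best achievable ordering. The sketch below uses the common linear-gain formulation; the exact variant used by OBLIQ-Bench is not stated here, and the function names and example judgments are illustrative assumptions.

```python
import math
from typing import Sequence


def dcg_at_k(relevances: Sequence[float], k: int) -> float:
    """Discounted cumulative gain over the top-k results (linear gain)."""
    return sum(rel / math.log2(rank + 2) for rank, rel in enumerate(relevances[:k]))


def ndcg_at_k(ranked: Sequence[float], judged: Sequence[float], k: int = 10) -> float:
    """nDCG@k: DCG of the system ranking divided by the ideal DCG.

    ranked: relevance of each returned document, in returned order.
    judged: relevance of every judged document for the query.
    """
    ideal = dcg_at_k(sorted(judged, reverse=True), k)
    return dcg_at_k(ranked, k) / ideal if ideal > 0 else 0.0


# Two relevant documents exist; the system returns them at ranks 2 and 5.
score = ndcg_at_k(ranked=[0, 1, 0, 0, 1], judged=[1, 1], k=10)
print(f"nDCG@10 = {score:.3f}")
```

A score of 1.0 means every relevant document appears at the top in the best possible order, so an 8% average indicates that relevant documents are usually missing from, or buried deep in, the top ten.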

Microsoft Gaia2: Dynamic Agent Benchmarks

Microsoft Research introduced Gaia2, an LLM agent evaluation benchmark focused on dynamic, asynchronous real-world environments. Unlike static evaluations, Gaia2 introduces noise, evolving constraints, temporal pressure, and multi-agent coordination. GPT-5 scored highest at 42% pass@1, though it struggled on time-sensitive tasks.

Engineering Lead Runs Hundreds of Agents from His Phone

Anthropic engineering head Boris Cherny revealed he does most of his work from a phone, with 5 to 10 active Claude sessions running hundreds of agents. Thousands of agents run deep tasks overnight. He uses a cron-based method called Loop to schedule agents every minute, hour, or day.

Zyphra Releases ZAYA1-8B Reasoning MoE

Zyphra released ZAYA1-8B, a reasoning model built on the DSMoE-MLA++ architecture, combining high-end RL with test-time scaling. The release was praised as frontier open-source work, with an 80B model reportedly upcoming. RLVE environments were adopted in its training pipeline.

Hugging Face Launches Open-Source Robot App Store

Hugging Face launched an open-source app store for the Reachy Mini robot, featuring over 200 applications. The platform aims to lower the barrier to robotics development, allowing users to add functionality as easily as downloading phone apps.

SVGS: Spatially Varying Colors Enhance Gaussian Splatting

SVGS introduces spatially varying color and opacity functions per Gaussian primitive, replacing single-color representations. All variants outperform baselines on novel view synthesis, with the shiftable kernel variant achieving best results across datasets.

Perplexity Agent API Adds Finance Search

Perplexity launched Finance Search in its Agent API, enabling developers to retrieve licensed financial datasets, real-time market data, and cited web sources in a single tool call. The feature targets agents that need current, verifiable financial answers.

Cursor 3.3 Visualizes Agent Context Usage

Cursor 3.3 introduces a context usage breakdown for agents, helping developers diagnose context issues and optimize setups across rules, skills, MCPs, and subagents. The feature surfaces exactly where token budgets are being consumed.

vLLM Integrates LightSeek MLA for Agentic Workloads

vLLM became the exclusive day-0 launch partner for LightSeek's Tokenspeed project, integrating an MLA library optimized for long-context, multi-turn agentic workloads on Kimi 2.5/2.6 and DeepSeek R1.

MiMo 2.5 Pro and GLM 5.1 Surpass DeepSeek and Kimi

In the latest benchmarks, MiMo 2.5 Pro and GLM 5.1 outperformed DeepSeek and Kimi by significant margins. On one evaluation, GLM 5.1 scored 58.1, MiMo 2.5 Pro 66.4, and GPT 5.5 77.8, while DeepSeek V4-Pro and V4-Flash remained nearly identical.

Luma Agents Auto-Generate Targeted Ad Variations

Luma launched creative agent features that generate targeted ads by defining audiences and setting content variations. The agents handle planning, generation, iteration, and optimization across the creative pipeline.

Bun's GitHub Bot Contributes More Than Its Creator

At Code w/ Claude, the Bun team revealed that robobun, their automated GitHub bot, has now made more contributions to the Bun project than founder Jarred Sumner himself.